08 Nov Ways to measure Quality of Experience (QoE)

Quality is the degree of excellence of something. Within the networked media ecosystem, there are many ways to define “quality”. However, in most digital multimedia entertainment applications, the real interest lies in the overall Quality of Experience (QoE) perceived by the end user. QoE represents how good a video looks, how good audio sounds, how well combined audiovisual content is perceived, or how well interactivity works with a specific audiovisual service.

Audiovisual services are intended for people. Nowadays, the volume of audiovisual traffic on the Internet and the number of television channels that have appeared in recent years are enormous, not to mention audiovisual platforms and OTT (Over the Top) services, which offer large catalogs of series, movies and documentaries and whose business model is, in most cases, based on a monthly subscription. As a result, end users have higher expectations about the quality of this content, and meeting them determines the success or failure of a service.

Measuring the quality of audiovisual content is not easy, mainly because it depends not only on objective measurement but also on subjective assessment, and the latter is very difficult to determine. In any audiovisual chain, video and audio are degraded during acquisition, compression, transmission, processing and display. These distortions directly affect the final quality perceived by end users. Typical video degradations include contrast or colour issues due to the nature of the scene, blurring, blocking, bitrate reduction caused by coding, and packet loss and latency during transmission, among others.

If QoE is understood as a metric that subjectively quantifies an end user's degree of satisfaction with a specific audiovisual service, there are two ways to measure it: subjective quality methods and objective quality methods.

Subjective video quality assessment methods measure the video quality as perceived by the Human Visual System (HVS) and are more accurate than objective assessment methods. In principle, they are the best way to determine quality because they take into account not only the audiovisual signal but also other important aspects such as the expectations and feelings of the users.

The main problem with this type of assessment is that preparing the test, running it and analysing the results are very tedious, and a large number of participants is required. In addition, for the results to be accepted, the test must follow the methodologies described in international standards such as ITU-R BT.500 (Methodology for the subjective assessment of the quality of television pictures), ITU-T P.910 (Subjective video quality assessment methods for multimedia applications) and ITU-T P.913 (Methods for the subjective assessment of video quality, audio quality and audiovisual quality of Internet video and distribution quality television in any environment). These standards define, among other aspects, the conditions of the test environment (lighting, viewing distance, monitor configuration), the duration of the sessions, the materials and the way the content is presented, the evaluation methods and scales, and the metrics for analysing the results.

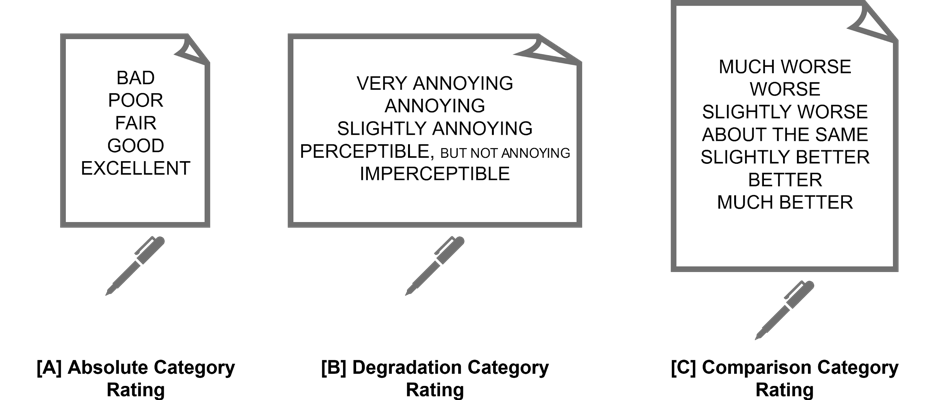

In terms of content presentation, three methodologies are most commonly used: single stimulus, double stimulus and triple stimulus. In single stimulus, only the test content to be evaluated is presented. In double stimulus, both the test content and the reference content (without any degradation) are presented. In triple stimulus, the reference is presented first, followed by the test content and the reference content in random order. Common rating methods for evaluating the content include Absolute Category Rating (ACR), Degradation Category Rating (DCR) and Comparison Category Rating (CCR).
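Once the ratings have been collected, they are usually summarised per test condition. The following is a minimal sketch, assuming ACR ratings on a five-point scale (1 = bad, 5 = excellent) and a normal-approximation 95% confidence interval. The function name and the sample ratings are hypothetical and only serve as an illustration.

```python
import math
import statistics


def mean_opinion_score(ratings):
    """Return (MOS, 95% confidence half-width) for ACR ratings on a 1-5 scale."""
    if len(ratings) < 2:
        raise ValueError("at least two ratings are needed")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ACR ratings must lie between 1 and 5")
    mos = statistics.mean(ratings)
    # Normal approximation (z = 1.96). With few viewers, a Student's t
    # quantile would give a wider and more cautious interval.
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width


# Hypothetical scores given by eight viewers to one test sequence.
ratings = [4, 5, 3, 4, 4, 5, 3, 4]
mos, ci = mean_opinion_score(ratings)
print(f"MOS = {mos:.2f} +/- {ci:.2f}")
```

Reporting the interval alongside the mean makes it easier to judge whether two test conditions really differ or whether more participants are needed.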

On the other hand, the objective quality methods use different algorithmic implementations with the aim of providing automatic estimations of the QoE perceived by the end users, predicting the effects of transmission impairments on the video quality (for example, distortion, luminance, contrast, noise, blurring, frame freezing or frame skipping). These implementations have been classified into different categories depending on the type of input data: the audiovisual signal, the packet header information, the network and terminal devices, bitstream information or hybrid models, the last one combining two or more models.

Independently of the above classification, there are three categories of video quality assessment (VQA) depending on the information and the content required for the evaluation: Full Reference (FR) where the original content (a reference) is needed, Reduced Reference (RR) where some parameters of the original content (but not the original content itself) are required, and No Reference (NR) where the original content is not needed.
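As an illustration of the Full Reference category, the sketch below computes PSNR (Peak Signal-to-Noise Ratio), a simple and widely used metric that compares the degraded frame with the original pixel by pixel. PSNR is chosen here only as an example and is not named in the classification above. The frames are assumed to be 8-bit grayscale images stored as lists of rows, and the sample values are hypothetical.

```python
import math


def psnr(reference, degraded, max_value=255):
    """PSNR in dB between two equally sized grayscale frames (lists of rows)."""
    same_shape = len(reference) == len(degraded) and all(
        len(a) == len(b) for a, b in zip(reference, degraded)
    )
    if not same_shape or not reference or not reference[0]:
        raise ValueError("frames must be non-empty and have the same dimensions")
    squared_error = 0
    count = 0
    for ref_row, deg_row in zip(reference, degraded):
        for r, d in zip(ref_row, deg_row):
            squared_error += (r - d) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical frames: no measurable distortion
    return 10 * math.log10(max_value ** 2 / mse)


# Hypothetical 2x2 frames: one pixel differs by 10 grey levels.
reference = [[100, 100], [100, 100]]
degraded = [[100, 110], [100, 100]]
value = psnr(reference, degraded)
print(f"PSNR = {value:.2f} dB")
```

PSNR is easy to compute but does not model human perception, which is why more elaborate Full Reference metrics exist.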

Reduced Reference assessments use features extracted from the reference content while avoiding the use of the whole reference signal. Features such as spatial information, temporal information and coding parameters are commonly used.
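The spatial and temporal information features can be sketched as follows. This is a simplified illustration that assumes the SI and TI definitions of ITU-T P.910: SI is the maximum over time of the standard deviation of the Sobel-filtered frame, and TI is the maximum over time of the standard deviation of the difference between consecutive frames. Frames are assumed to be grayscale lists of rows, border pixels are skipped, and the tiny test frames are hypothetical.

```python
import math
import statistics


def sobel_magnitude(frame):
    """Sobel gradient magnitude for the interior pixels of a grayscale frame."""
    height, width = len(frame), len(frame[0])
    magnitudes = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = (frame[y - 1][x + 1] + 2 * frame[y][x + 1] + frame[y + 1][x + 1]
                  - frame[y - 1][x - 1] - 2 * frame[y][x - 1] - frame[y + 1][x - 1])
            gy = (frame[y + 1][x - 1] + 2 * frame[y + 1][x] + frame[y + 1][x + 1]
                  - frame[y - 1][x - 1] - 2 * frame[y - 1][x] - frame[y - 1][x + 1])
            magnitudes.append(math.hypot(gx, gy))
    return magnitudes


def spatial_information(frames):
    """SI: maximum over time of the spatial std of the Sobel-filtered frame."""
    return max(statistics.pstdev(sobel_magnitude(f)) for f in frames)


def temporal_information(frames):
    """TI: maximum over time of the spatial std of consecutive frame differences."""
    if len(frames) < 2:
        raise ValueError("at least two frames are needed")
    stds = []
    for prev, cur in zip(frames, frames[1:]):
        diff = [c - p for prow, crow in zip(prev, cur) for p, c in zip(prow, crow)]
        stds.append(statistics.pstdev(diff))
    return max(stds)


# Hypothetical 5x5 frames: a flat frame followed by one with a vertical edge.
flat = [[0] * 5 for _ in range(5)]
edge = [[0, 0, 0, 100, 100] for _ in range(5)]
frames = [flat, edge]
si = spatial_information(frames)
ti = temporal_information(frames)
print(f"SI = {si:.2f}, TI = {ti:.2f}")
```

Because SI and TI are just two numbers per sequence, they can be sent alongside the content at a negligible cost compared with the full reference signal.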

For No Reference assessments, only the degraded audiovisual content is required. These types of assessment look for artifacts and other degradations. They are more complex than the previous methods, and they are particularly attractive because the original content is not needed.

Different metrics are calculated on the degraded content (freeze detection, black frames, loss of bitrate, pixel information). These measurements are then combined to estimate a QoE value. Perception models of the HVS can be incorporated as sensitivity thresholds.
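A toy version of this No Reference pipeline could look like the sketch below. It detects black and frozen frames on the degraded content only and combines both ratios into a score on a 1 to 5 scale. The thresholds and weights are hypothetical placeholders chosen for illustration, not values taken from any standard or product. A real model would calibrate them against subjective test results.

```python
def mean_luma(frame):
    """Average pixel value of a grayscale frame (list of rows)."""
    pixels = [p for row in frame for p in row]
    return sum(pixels) / len(pixels)


def mean_abs_diff(a, b):
    """Average absolute pixel difference between two equally sized frames."""
    diffs = [abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb)]
    return sum(diffs) / len(diffs)


def no_reference_score(frames, black_threshold=20, freeze_threshold=0.5,
                       black_weight=2.0, freeze_weight=3.0):
    """Return (score, black_ratio, freeze_ratio); all parameters are assumptions."""
    if not frames:
        raise ValueError("at least one frame is needed")
    # A frame counts as black when its average luma is below the threshold.
    black = sum(1 for f in frames if mean_luma(f) < black_threshold)
    # A frame counts as frozen when it barely differs from the previous one.
    frozen = sum(1 for prev, cur in zip(frames, frames[1:])
                 if mean_abs_diff(prev, cur) < freeze_threshold)
    black_ratio = black / len(frames)
    freeze_ratio = frozen / max(len(frames) - 1, 1)
    score = 5.0 - black_weight * black_ratio - freeze_weight * freeze_ratio
    return max(1.0, min(5.0, score)), black_ratio, freeze_ratio


def solid(value):
    """Hypothetical 2x2 frame filled with a single grey level."""
    return [[value, value], [value, value]]


# Four frames: normal, normal, repeated (frozen), black.
clip = [solid(100), solid(120), solid(120), solid(0)]
score, black_ratio, freeze_ratio = no_reference_score(clip)
print(f"score = {score:.2f}, black = {black_ratio:.2f}, frozen = {freeze_ratio:.2f}")
```

The thresholds play the role of the sensitivity thresholds mentioned above: impairments below them are assumed to go unnoticed by the viewer.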
