30 May
The relevance of loudness in streaming services in the media industry: why does it matter?

So far in the blog we have focused on some of the issues affecting images in streaming services. But if these companies want to deliver a great user experience, they must not neglect the sound of their productions.

Mastering is typically the last step in audio production. When we refer to mastering, we mean the process of putting the finishing touches on a file by enhancing its overall sound. It creates consistency across all the episodes of a series, a film, or a documentary, and prepares the content for online distribution.

When we master a file, we take a mix and put the final touches on it by enhancing certain audio characteristics. This usually involves adjusting levels, applying stereo enhancement, and shaping tone; in other words, dealing with anything that could distract the listener from the audio. The result should be a polished, clean sound that plays back consistently across different formats and systems.

Given this overview, mastering is a necessary step to create the “finished” sound that companies want their customers to hear. When a project contains more than a single track, mastering engineers not only improve each individual track but also establish a consistent listening experience across the whole project, such as an entire season.

Loudness normalization

Closely related to mastering is loudness normalization. Its goal is not to force, or even encourage, mastering engineers to work toward a specific level. Loudness normalization is done for the benefit of the end user: thanks to it, someone listening to different types of material from a variety of sources (e.g., a series, a film, etc.) doesn't have to keep reaching for the volume control.

This improves the user experience, and it is one of the main reasons why most streaming and broadcasting platforms use it. In fact, these media companies ask content providers to meet certain technical requirements. However, loudness normalization must be done appropriately to avoid distortion during the encoding and decoding process.

For some years now, streaming services have used loudness normalization to manage the playback level of the files on their platforms.

Loudness normalization matters in streaming because it lets users play any file, no matter when it was produced. Music and films have been mastered over many decades, and content from different eras often has very different loudness levels. Streaming platforms therefore apply gain adjustments so that all content plays back at a consistent level. That way, listeners don't have to adjust the volume at the start of every title just because it was mastered decades apart from the previous one they watched.
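The gain adjustment described above boils down to simple arithmetic: the gain in dB is the target loudness minus the measured loudness. Below is a minimal sketch, assuming the programme's integrated loudness has already been measured; the function names are hypothetical, and it applies a single static gain with no limiting, which real platforms may handle differently.

```python
from __future__ import annotations


def normalization_gain_db(measured_lufs: float, target_lufs: float) -> float:
    """Gain in dB that moves a programme's integrated loudness to the target level."""
    return target_lufs - measured_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


def normalize(samples: list[float], measured_lufs: float, target_lufs: float) -> list[float]:
    """Apply one static gain to every sample; no compression or limiting."""
    factor = db_to_linear(normalization_gain_db(measured_lufs, target_lufs))
    return [s * factor for s in samples]


# Hypothetical measurements: an older film and a recent series, both played back at -14 LUFS.
print(normalization_gain_db(-20.0, -14.0))  # 6.0 (turned up)
print(normalization_gain_db(-10.0, -14.0))  # -4.0 (turned down)
```

With these hypothetical numbers, the older film is turned up by 6 dB and the recent series is turned down by 4 dB, so both reach the same playback loudness.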

LUFS (Loudness Units Full Scale)

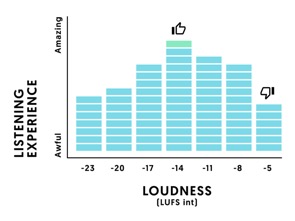

A common mastering target for streaming is an integrated loudness of -14 LUFS, while broadcast television under EBU R 128 uses -23 LUFS. The reason is that -14 LUFS matches the loudness normalization reference of many streaming services. Although other measurements, such as the true peak value, also need to be considered, integrated loudness in LUFS is one of the most useful metrics when addressing loudness.

We must remember that LUFS is a way of measuring the level and loudness of audio that incorporates human hearing patterns, so it correlates better with perceived loudness than other types of measurement. LUFS stands for Loudness Units Full Scale, or Loudness Units relative to Full Scale. It comes out of research by the EBU (European Broadcasting Union), an alliance of public service broadcasters in Europe.

This research studied how a large sample of listeners perceive loudness, so that the measurement reflects perceived loudness more accurately.
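To make the measurement concrete, here is a simplified pure-Python sketch of gated integrated loudness as specified in ITU-R BS.1770, the algorithm EBU R 128 builds on: K-weighting filter, mean square over 400 ms blocks with 75 % overlap, an absolute gate at -70 LUFS and a relative gate 10 LU below. Assumptions: mono audio at 48 kHz as a list of floats between -1 and 1, and the 48 kHz K-weighting coefficients tabulated in BS.1770; multichannel weighting and other sample rates are left out, and the function names are hypothetical.

```python
import math

# K-weighting biquads for 48 kHz as (b0, b1, b2, a1, a2); other rates need new coefficients.
SHELF = (1.53512485958697, -2.69169618940638, 1.19839281085285,
         -1.69065929318241, 0.73248077421585)
HIGHPASS = (1.0, -2.0, 1.0, -1.99004745483398, 0.99007225036621)


def biquad(x, coeffs):
    """Direct-form I biquad: y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]."""
    b0, b1, b2, a1, a2 = coeffs
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for s in x:
        y = b0 * s + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, s
        y2, y1 = y1, y
        out.append(y)
    return out


def lufs(power):
    """Loudness of a mean-square value; -0.691 cancels the K-filter gain near 1 kHz."""
    return -0.691 + 10 * math.log10(power)


def integrated_loudness(samples, rate=48000):
    """Gated integrated loudness (LUFS) of a mono signal."""
    if rate != 48000:
        raise ValueError("this sketch only has K-weighting coefficients for 48 kHz")
    y = biquad(biquad(samples, SHELF), HIGHPASS)
    # Prefix sums of squared samples give each block's mean square cheaply.
    prefix = [0.0]
    for v in y:
        prefix.append(prefix[-1] + v * v)
    block, step = int(0.4 * rate), int(0.1 * rate)  # 400 ms blocks, 100 ms hop
    powers = [(prefix[i + block] - prefix[i]) / block
              for i in range(0, len(y) - block + 1, step)]
    # Absolute gate: ignore blocks at or below -70 LUFS (and digital silence).
    gated = [p for p in powers if p > 0 and lufs(p) > -70.0]
    if not gated:
        return float("-inf")
    # Relative gate: ignore blocks more than 10 LU below the absolute-gated loudness.
    relative = lufs(sum(gated) / len(gated)) - 10.0
    gated = [p for p in gated if lufs(p) > relative]
    return lufs(sum(gated) / len(gated))


# Sanity check with a hypothetical test signal: 3 s of a full-scale 1 kHz sine.
rate = 48000
tone = [math.sin(2 * math.pi * 1000 * n / rate) for n in range(3 * rate)]
print(round(integrated_loudness(tone, rate), 1))
```

A full-scale 1 kHz sine should read close to -3 LUFS, which makes it a handy check that the filter and gating are wired up correctly.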

Master loudness

Loudness normalization does not affect the dynamic range of a recording. It only turns your master up or down to match a set reference loudness. Whether normalization turns a file up or down, it only changes the overall level; loud and quiet moments keep the same volume relative to each other.
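A quick way to see that a static gain leaves dynamics untouched: the sketch below builds a hypothetical two-part signal (a quiet passage, then a loud one), applies a -6 dB normalization gain, and compares the loud-to-quiet level difference and the crest factor before and after. It uses plain RMS and sample-peak levels rather than LUFS, purely as a simple illustration.

```python
import math


def rms_db(samples):
    """RMS level in dB relative to full scale."""
    return 10 * math.log10(sum(s * s for s in samples) / len(samples))


def peak_db(samples):
    """Sample-peak level in dB relative to full scale."""
    return 20 * math.log10(max(abs(s) for s in samples))


def crest_factor_db(samples):
    """Peak-to-RMS ratio in dB, used here as a simple proxy for dynamic range."""
    return peak_db(samples) - rms_db(samples)


# Hypothetical programme: a quiet passage followed by a loud one (100 cycles each).
period = 48
quiet = [0.05 * math.sin(2 * math.pi * n / period) for n in range(100 * period)]
loud = [0.8 * math.sin(2 * math.pi * n / period) for n in range(100 * period)]
programme = quiet + loud

# Normalization multiplies every sample by the same factor (here, -6 dB).
gain = 10 ** (-6 / 20)
normalized = [s * gain for s in programme]

split = len(quiet)
before = rms_db(programme[split:]) - rms_db(programme[:split])
after = rms_db(normalized[split:]) - rms_db(normalized[:split])
print(round(before, 2), round(after, 2))  # loud-to-quiet difference
print(round(crest_factor_db(programme), 2), round(crest_factor_db(normalized), 2))
```

Both pairs print identical values: the loud passage stays about 24.08 dB above the quiet one, and the crest factor does not change, even though the whole programme is now 6 dB quieter.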

However, mastering to a LUFS target isn't the only thing we need to pay attention to. Although a LUFS target gives us a good middle ground across multiple streaming services, it is important to consider how integrated loudness relates to dynamic range: turning a highly dynamic master up through normalization can push its peaks past full scale and cause clipping. We also need to understand how encoding affects the master's level.
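The peaking risk can be checked before delivery with simple arithmetic: the peak after normalization is the measured peak plus the normalization gain. A minimal sketch, assuming the master's integrated loudness and peak level have already been measured; the `Master` type and function names are hypothetical, and the default ceiling of -1 dB is the maximum true-peak level in EBU R 128 (each streaming service publishes its own limits, and services differ in whether they limit or cap the gain).

```python
from dataclasses import dataclass


@dataclass
class Master:
    """Hypothetical container for measurements taken on a finished master."""
    integrated_lufs: float  # integrated loudness, LUFS
    peak_db: float          # peak level, ideally true peak in dBTP


def peak_after_normalization(master: Master, target_lufs: float) -> float:
    """Peak level after a static normalization gain is applied."""
    gain_db = target_lufs - master.integrated_lufs
    return master.peak_db + gain_db


def headroom_ok(master: Master, target_lufs: float, ceiling_db: float = -1.0) -> bool:
    """True if normalizing to the target keeps the peak at or below the ceiling."""
    return peak_after_normalization(master, target_lufs) <= ceiling_db


# Hypothetical highly dynamic master: quiet on average, with peaks near full scale.
dynamic = Master(integrated_lufs=-20.0, peak_db=-2.0)
print(peak_after_normalization(dynamic, -14.0))  # 4.0: peaks would exceed full scale
print(headroom_ok(dynamic, -14.0))  # False
```

Turning this hypothetical master up by 6 dB would put its peaks at +4 dB, so something has to give: either the platform limits the signal or the master needs a different balance between loudness and peaks.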

The relevance of EBU R 128

Another key loudness normalization standard is EBU R 128, which gives broadcasters guidance on measuring and normalizing audio with loudness meters instead of relying only on peak programme meters (PPMs). It is promoted by the EBU, the European Broadcasting Union (which operates Eurovision and Euroradio).

EBU R 128 is the result of several years of intensive work by the EBU's audio experts. The recommendation is accompanied by supplements that give specific guidance on different broadcast scenarios (such as short-form content or streaming).

EBU R 128 recommends measuring the true peak, an estimate of the actual peaks the signal reaches once it is converted from digital to analog, to avoid clipping and distortion. This is done by over-sampling the signal 4 times and keeping the peak values. Content whose integrated loudness is above the recommended level is turned down to bring it back to -23 LUFS.
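The idea behind true-peak measurement can be sketched in Python: oversample by 4, then take the largest absolute value. This sketch assumes a mono list of floats and uses a generic Hann-windowed sinc interpolator; it is not the specific FIR filter tabulated in ITU-R BS.1770, so readings may differ slightly from a compliant meter. Function names are hypothetical.

```python
import math


def true_peak(samples, factor=4, half_width=32):
    """Estimate the true peak (linear) by oversampling with a windowed-sinc interpolator."""
    n = len(samples)
    # The original samples are part of the oversampled signal.
    peak = max(abs(s) for s in samples)
    for i in range(n):
        for k in range(1, factor):
            t = i + k / factor  # fractional position between samples i and i + 1
            acc = 0.0
            for j in range(i - half_width + 1, i + half_width + 1):
                if 0 <= j < n:  # samples outside the signal are treated as zero
                    x = t - j  # never an integer, so no division by zero
                    window = 0.5 + 0.5 * math.cos(math.pi * x / half_width)  # Hann taper
                    acc += samples[j] * math.sin(math.pi * x) / (math.pi * x) * window
            peak = max(peak, abs(acc))
    return peak


def to_dbtp(value):
    """Convert a linear true-peak value to dBTP."""
    return 20 * math.log10(value)


# Hypothetical test tone: a sine at a quarter of the sample rate with a 45-degree phase offset.
# Every sample lands at +/-0.707, but the waveform between samples reaches 1.0.
tone = [math.sin(math.pi / 2 * n + math.pi / 4) for n in range(400)]
print(round(to_dbtp(max(abs(s) for s in tone)), 2))  # sample peak: -3.01
print(round(to_dbtp(true_peak(tone)), 2))  # true-peak estimate
```

A sample-peak meter would report -3.01 dBFS for this tone, while the oversampled estimate comes out close to 0 dBTP, above the -1 dBTP maximum true-peak level set in EBU R 128. That gap is exactly what true-peak measurement is meant to catch.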
